NVIDIA Honors Americas Partners Advancing Agentic and Physical AI

March 19, 2025 at 3:00 pm

NVIDIA this week recognized 14 partners leading the way across the Americas for their work advancing agentic and physical AI across industries.

The 2025 Americas NVIDIA Partner Network awards — announced at the GTC 2025 global AI conference — represent key efforts by industry leaders to help customers become experts in using AI to solve many of today’s greatest challenges. The awards honor the diverse contributions of NPN members fostering AI-driven innovation and growth.

This year, NPN introduced three new award categories that reflect how AI is driving economic growth and opportunities:

- Trailblazer, which honors a visionary partner spearheading AI adoption and setting new industry standards.

- Rising Star, which celebrates an emerging talent helping industries harness AI to drive transformation.

- Innovation, which recognizes a partner that’s demonstrated exceptional creativity and forward thinking.

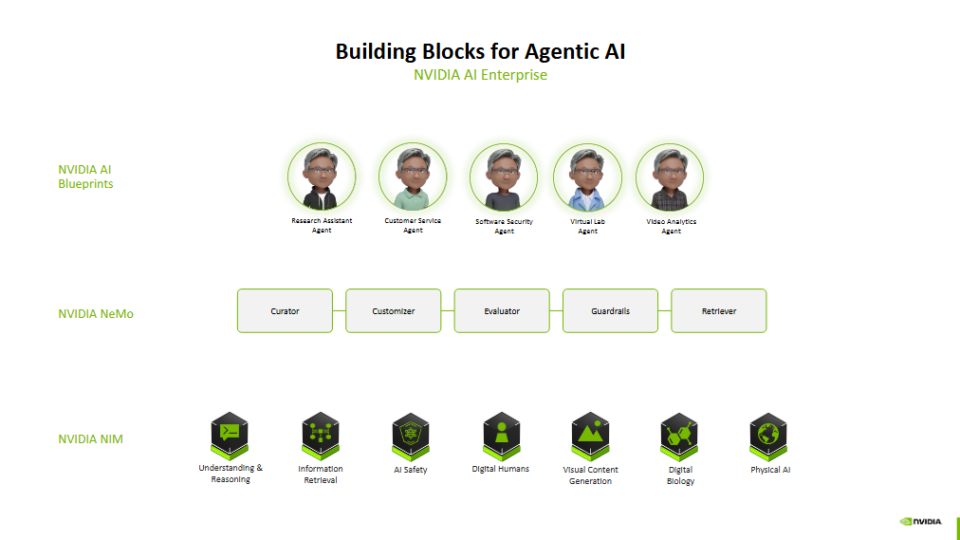

This year’s NPN ecosystem winners have helped companies across industries use AI to adapt to new challenges and prioritize energy-efficient accelerated computing. NPN partners help customers implement a broad range of AI technologies, including NVIDIA-accelerated AI factories, as well as large language models and generative AI chatbots, to transform business operations.

The 2025 NPN award winners for the Americas are:

- Global Consulting Partner of the Year — Accenture is recognized for its impact and depth of engineering with its AI Refinery platform for industries, simulation and robotics, marketing and sovereignty, which helps organizations enhance innovation and growth with custom-built approaches to AI-driven enterprise reinvention.

- Trailblazer Partner of the Year — Advizex is recognized for its commitment to driving innovation in AI and high-performance computing, helping industries like healthcare, manufacturing, retail and government seamlessly integrate advanced AI technologies into existing business frameworks. This enables organizations to achieve significant operational efficiencies, enhanced decision-making and accelerated digital transformation.

- Rising Star Partner of the Year — AHEAD is recognized for its leadership, technical expertise and deployment of NVIDIA software, NVIDIA DGX systems, NVIDIA HGX and networking technologies to advance AI, benefiting customers across healthcare, financial services, life sciences and higher education.

- Networking Partner of the Year — Computacenter is recognized for advancing high-performance computing and data centers with NVIDIA networking technologies. The company achieved this by using the NVIDIA AI Enterprise software platform, DGX platforms and NVIDIA networking to drive innovation and growth throughout industries with efficient, accelerated data centers.

- Solution Integration Partner of the Year — EXXACT is recognized for its efforts in helping research institutions and businesses tap into generative AI, large language models and high-performance computing. The company harnesses NVIDIA GPUs and networking technologies to deliver powerful computing platforms that accelerate innovation and tackle complex computational challenges across various industries.

- Enterprise Partner of the Year — World Wide Technology (WWT) is recognized for its leadership in advancing AI adoption among customers across industry verticals worldwide. The company expanded its end-to-end AI capabilities by integrating NVIDIA Blueprints into its AI Proving Ground and has made a $500 million commitment to AI development over three years to help speed enterprise generative AI deployments.

- Software Partner of the Year — Mark III is recognized for the work of its cross-functional team spanning data scientists, developers, 3D artists, systems engineers, and HPC and AI architects, as well as its close collaborations with enterprises and institutions, to deploy NVIDIA software, including NVIDIA AI Enterprise and NVIDIA Omniverse, across industries. These efforts have helped many customers build software-powered pipelines and data flywheels with machine learning, generative AI, high-performance computing and digital twins.

- Higher Education Research Partner of the Year — Mark III is recognized for its close engagement with universities, academic institutions and research organizations to cultivate the next generation of leaders across AI, machine learning, generative AI, high-performance computing and digital twins.

- Healthcare Partner of the Year — Lambda is recognized for empowering healthcare and biotech organizations with AI training, fine-tuning and inferencing solutions to speed innovation and drive breakthroughs in AI-driven drug discovery. The company provides these solutions at every scale — from individual workstations to comprehensive AI factories — that help healthcare providers seamlessly integrate NVIDIA accelerated computing and software into their infrastructure.

- Financial Services Partner of the Year — WWT is recognized for driving the digital transformation of the world’s largest banks and financial institutions. The company harnesses NVIDIA AI technologies to optimize data management, enhance cybersecurity and deliver transformative generative AI solutions, helping financial services clients navigate rapid technological changes and evolving customer expectations.

- Innovation Partner of the Year — Cambridge Computer is recognized for supporting customers deploying transformative technologies, including NVIDIA Grace Hopper, NVIDIA Blackwell and the NVIDIA Omniverse platform for physical AI.

- Service Delivery Partner of the Year — SoftServe is recognized for its impact in driving enterprise adoption of NVIDIA AI and Omniverse with custom NVIDIA Blueprints that tap into NVIDIA NIM microservices and NVIDIA NeMo and Riva software. SoftServe helps customers create generative AI services for industries spanning manufacturing, retail, financial services, auto, healthcare and life sciences.

- Distribution Partner of the Year — TD SYNNEX has been recognized for the second consecutive year for supporting customers in accelerating AI growth through rapid delivery of NVIDIA accelerated computing and software, as part of its Destination AI initiative.

- Rising Star Consulting Partner of the Year — Tata Consultancy Services (TCS) is recognized for its growth and commitment to providing industry-specific solutions that help customers adopt AI faster and at scale. Through its recently launched business unit and center of excellence built on NVIDIA AI Enterprise and Omniverse, TCS is poised to accelerate adoption of agentic AI and physical AI solutions to speed innovation for customers worldwide.

- Canadian Partner of the Year — Hypertec is recognized for its advancement of high-performance computing and generative AI across Canada. The company has employed the full-stack NVIDIA platform to accelerate AI for financial services, higher education and research.

- Public Sector Partner of the Year — Government Acquisitions (GAI) is recognized for its rapid AI deployment and robust customer relationships, helping serve the unique needs of the federal government by adding AI to operations to improve public safety and efficiency.

Learn more about the NPN program.
