The Dream Life Awaits: Play ‘inZOI’ on GeForce NOW Anytime, Anywhere

March 27, 2025 at 1:00 pm


A new resident is moving into the cloud — KRAFTON’s inZOI joins the 2,000+ games in the GeForce NOW cloud gaming library.

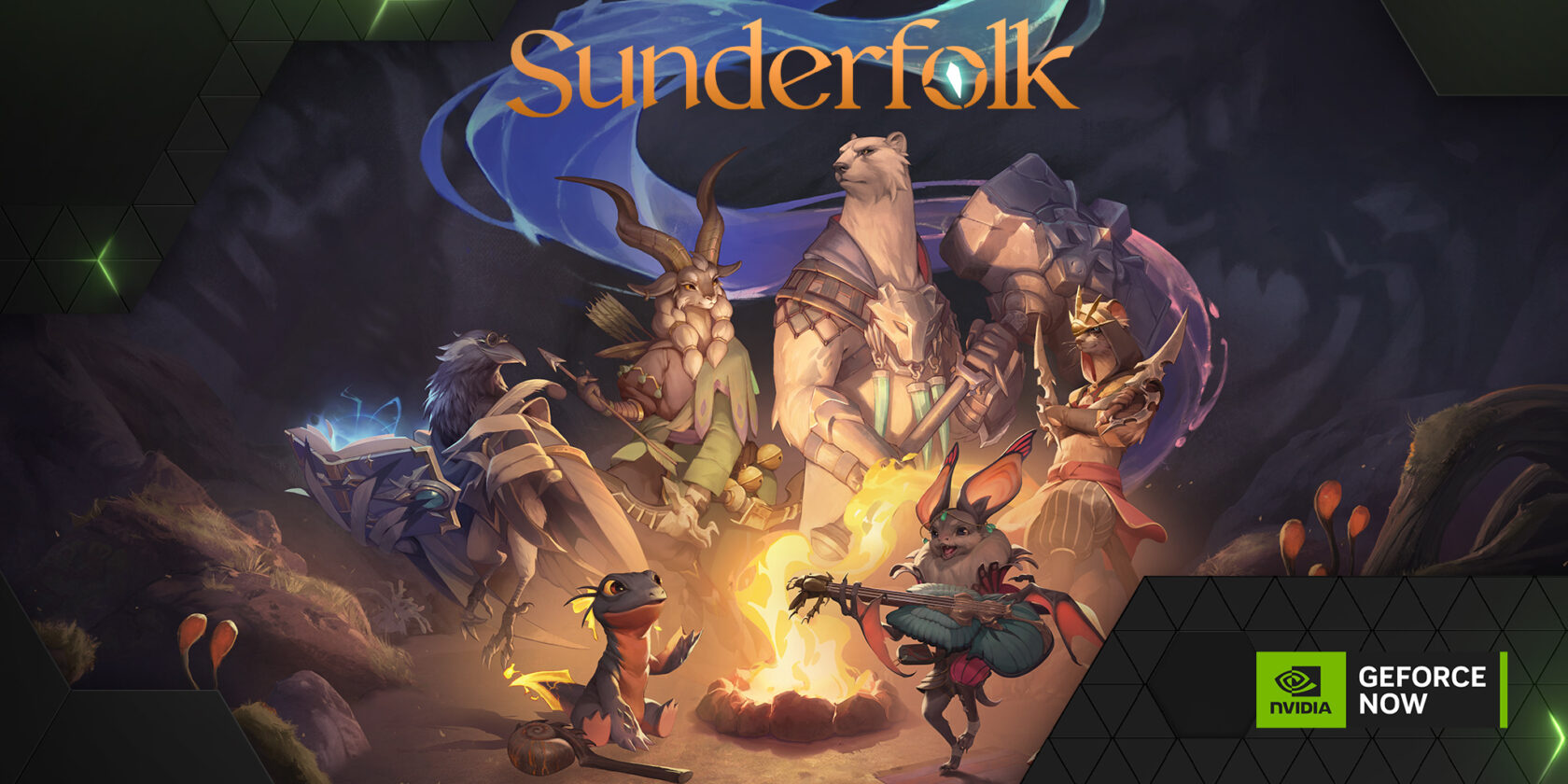

Plus, members can get ready for an exclusive sneak peek as the Sunderfolk First Look Demo comes to the cloud. The demo is available exclusively on GeForce NOW until April 7 for all players, including Performance and Ultimate members as well as free users.

And explore the world of Atomfall, one of 12 games joining the cloud this week.

Cloud of Possibilities

In inZOI, a groundbreaking life simulation game by KRAFTON that pushes the genre’s boundaries, take on the role of an intern at AR COMPANY, managing virtual beings called “Zois” in a simulated city.

The game features over 400 mental elements influencing Zois’ behaviors. Experience the game’s dynamic weather system, open-world environments inspired by real locations and cinematic cutscenes for key life events, and even create in-game objects. inZOI lets players craft unique stories and live out their dreams in a meticulously designed virtual world.

Dive into the world of Zois without the need for high-end hardware. Members can manage their virtual homes, customize characters and explore the game’s dynamic environments from various devices, streaming its detailed graphics and complex simulations with ease.

A Magical Gateway

Sunderfolk’s First Look Demo has arrived on GeForce NOW, offering a tantalizing look into the magical realm of the Sunderlands. Designed as a TV-first experience, this shared-turn-based tactical role-playing game (RPG) enables using a mobile phone as the gameplay controller. Up to four players can gather around the big screen and embark on a journey filled with strategic battles.

This second-screen approach keeps players engaged in real time, adding new layers of immersion. With all six unique character classes unlocked from the start, players can experience the early hours of the game, experimenting with different team compositions and tactics to overcome the challenges that await.

Accessing the demo is a breeze — head to the GeForce NOW app, select Sunderfolk and jump right in. Explore the Sunderlands, engage in flexible turn-based combat and help rebuild the village of Arden to get a taste of the full game’s depth and camaraderie.

Gather the gaming squad, grab a phone and prepare to write a completely new legend in this RPG adventure. The First Look Demo is only available on GeForce NOW, where members can enjoy high-quality graphics and seamless gameplay on their phones and tablets, along with the innovative mobile-as-controller mechanic that makes Sunderfolk’s couch co-op experience so engaging.

Epic Adventures Await

Blending folk horror and intense combat, Atomfall is a survival-action game set in an alternate 1960s Britain, where the Windscale nuclear disaster has left Northern England a radioactive wasteland. Players explore eerie open zones filled with mutated creatures, cultists and Cold War mysteries while scavenging resources, crafting weapons and uncovering the truth behind the disaster. GeForce NOW members can stream it today across their devices of choice.

Look for the following games available to stream in the cloud this week:

- Sunderfolk First Look Demo (New release, March 25)

- Atomfall (New release on Steam and Xbox, available on PC Game Pass, March 27)

- The First Berserker: Khazan (New release on Steam, March 27)

- inZOI (New release on Steam, March 27)

- Beholder (Epic Games Store)

- Bus Simulator 21 (Epic Games Store)

- Galacticare (Xbox, available on PC Game Pass)

- Half-Life 2 RTX Demo (Steam)

- The Legend of Heroes: Trails through Daybreak II (Steam)

- One Lonely Outpost (Xbox, available on PC Game Pass)

- Psychonauts (Xbox, available on PC Game Pass)

- Undying (Epic Games Store)

What are you planning to play this weekend? Let us know on X or in the comments below.

Which game do you think deserves a sequel? ➡️

— 🌩️ NVIDIA GeForce NOW (@NVIDIAGFN) March 26, 2025
